Everything New in Home Assistant 2023.2!

Home Assistant 2023.2 has big updates when it comes to voice, with its new Assist feature. We also have live graphs, aliases and sensor groups, plus so much more!

Home Assistant’s February release for 2023 is here! This month includes lots of new features to help you build out your smart home better than ever before, including new ways to group sensors, live graphs, many new integrations and, of course, lots of new things to play around with when it comes to voice!


So, as we know, this year is Home Assistant's Year of the Voice, which means that the team's overall mission and focus for this year is to deliver a good voice experience using Home Assistant. That means using your voice to control your smart home, much in the way that you can with Google Home, Amazon Alexa or Siri, but done with a focus on local control and privacy. The January update had one or two small voice-related features but nothing huge. This month, though, there is lots to talk about when it comes to voice... so let's get started, shall we?

Chapter 1 - Assist

First up we have what the team calls Assist: Assist is essentially what I would describe as the framework for starting to interact with Home Assistant using your own natural language - be that through voice or through text.

I must admit, I personally kind of wish they had chosen a different name. Home Assistant Assist doesn’t really roll off the tongue and I think it might get a little confusing, but maybe they just wanted to keep it a bit more generic for now and we will see what happens in the future, who knows?!

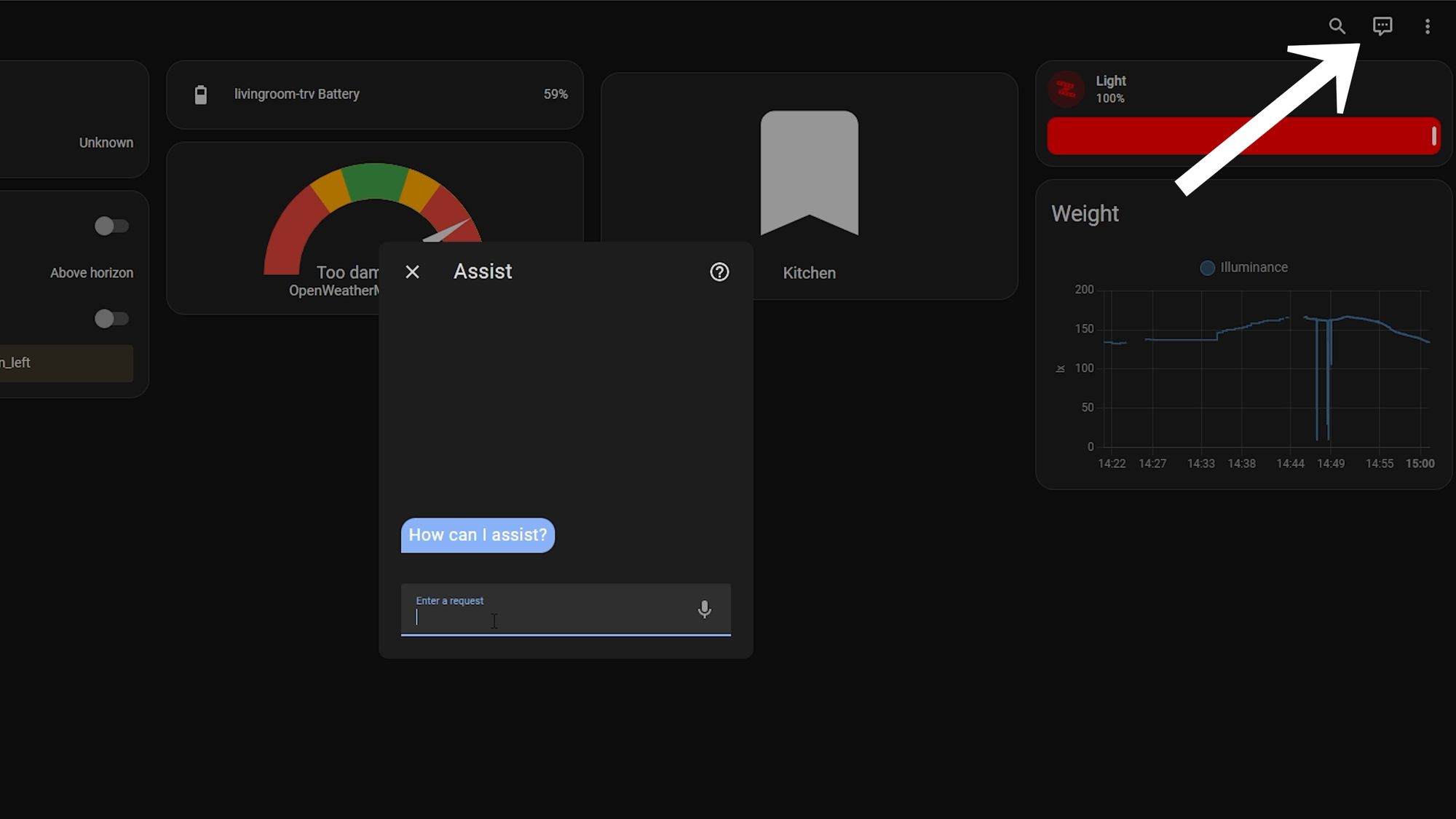

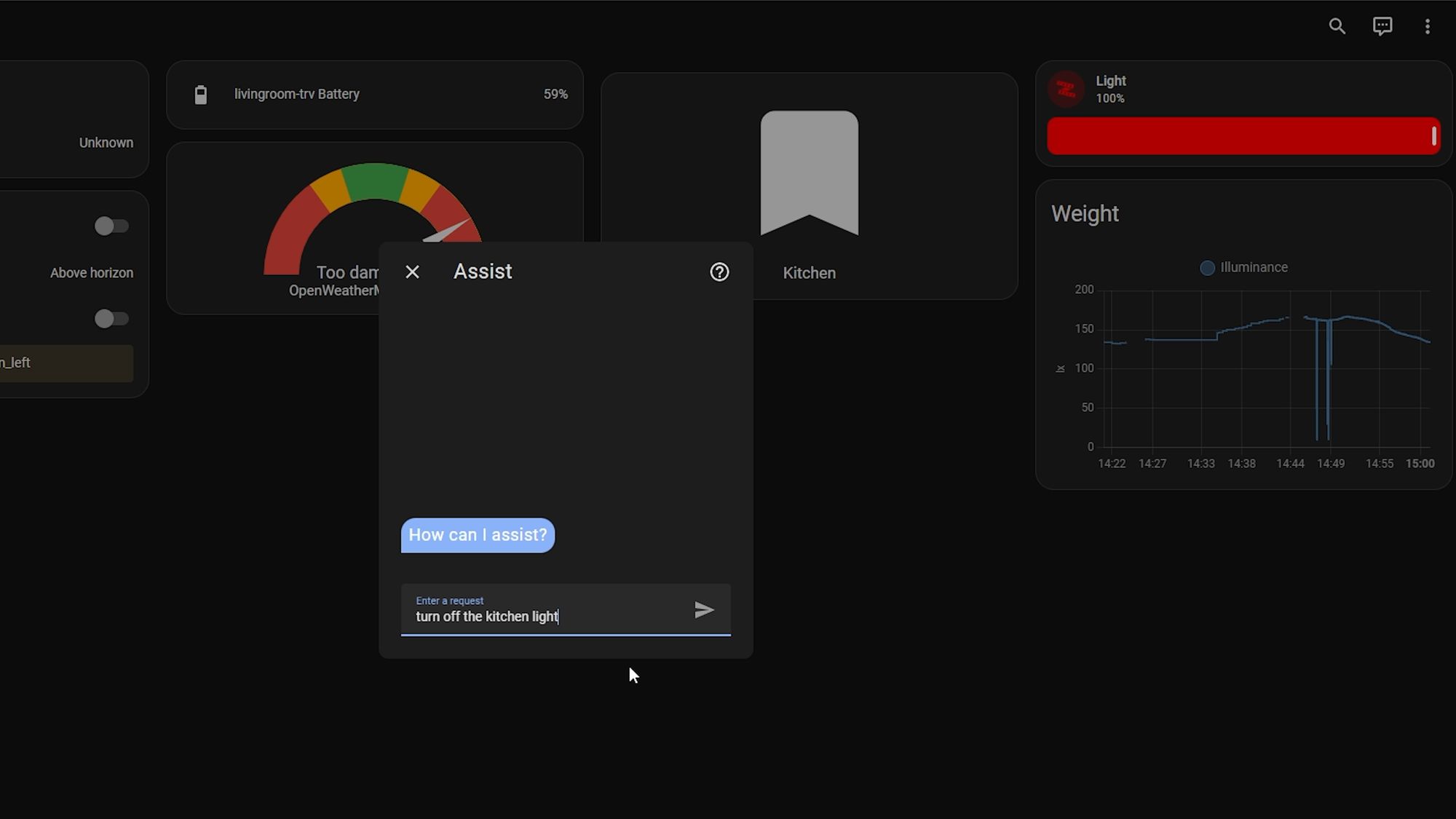

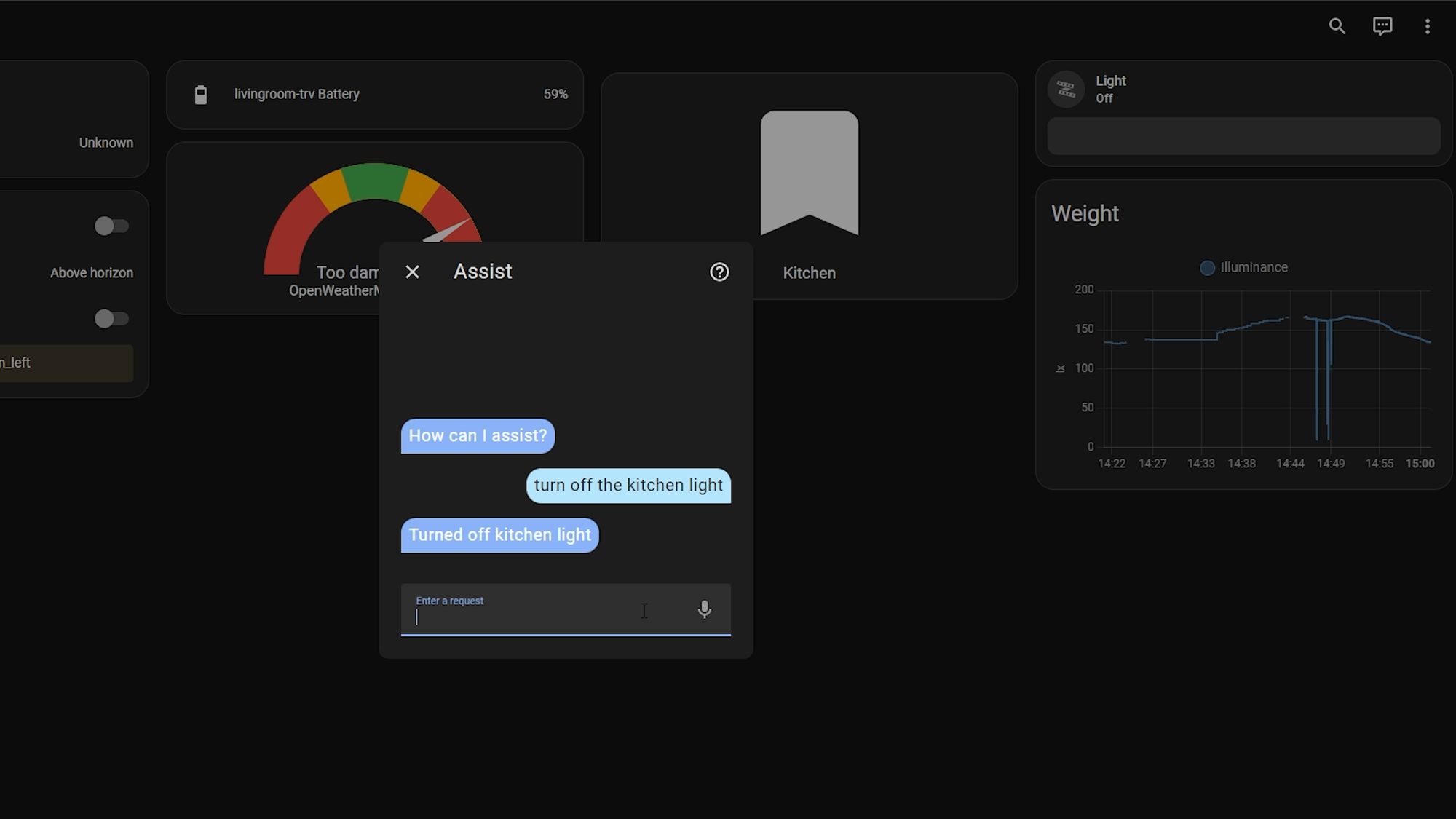

Using Assist with Text

You can see the first glimpses of Assist on your dashboard already. If you hit the little speech icon in the top right hand corner, you can start typing commands to Home Assistant, which it can then act on. You will need to be quite direct with commands to get them to work and it only supports basic stuff currently but, again, this is only the 2nd release of the year, so I’m sure this is going to be expanded on as we get through the months.

You are probably wondering: OK, but that’s text, what does that have to do with voice? Well, like I mentioned, this is more of a framework currently to allow it to support voice. A big part of getting voice to work, after you have converted the speech to text, is to process that text and turn it into an action, and that is what you can see happening with Assist here. If you were a user of the conversation integration, then some of this may look familiar to you already.
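To make that text-to-action step a little more concrete, here is a toy sketch in Python. It is not how Home Assistant actually does it under the hood: the sentence pattern, the `Intent` class and the `parse_command` function are all made up for illustration, and it only understands very direct "turn on/off" commands.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Intent:
    """A recognised command: what to do and which device to do it to."""
    action: str  # "turn_on" or "turn_off"
    name: str    # the device name as the user typed it, lowercased


# Hypothetical pattern: "turn on/off [the] <name>", matched case-insensitively.
_PATTERN = re.compile(
    r"^turn (?P<state>on|off) (?:the )?(?P<name>.+)$", re.IGNORECASE
)


def parse_command(text: str) -> Optional[Intent]:
    """Return an Intent for a direct command, or None if it isn't understood."""
    # Drop surrounding whitespace and any trailing punctuation before matching.
    match = _PATTERN.match(text.strip().rstrip(".!?"))
    if match is None:
        return None
    return Intent(
        action=f"turn_{match['state'].lower()}",
        name=match["name"].lower(),
    )
```

Calling `parse_command("Turn on the kitchen light")` gives back an intent to turn on "kitchen light", while a sentence the pattern doesn't cover simply returns `None`, which is roughly why you need to be quite direct with your commands for now.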

Language Support

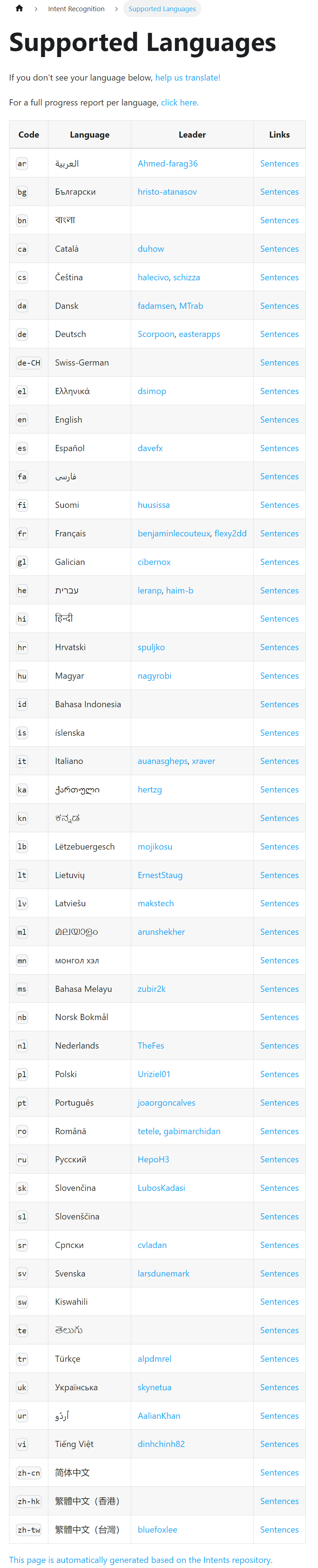

The other cool thing about Assist is how many languages it already supports after such a short amount of time, with support for these basic commands completed in 39 languages and more underway.

With that being said, Home Assistant is relying heavily on the community to help with the translations, so if you want to help out and make sure your language is supported, you can put yourself forward to be a language leader or you can help in other ways in language translations.

Voice Demos

Now, we get onto some of the fun demos and features that do use voice. Paulus and Mike, who was brought onto the Nabu Casa team this year to drive the voice stuff forward, hosted a live stream last week where they showed off the ways you can now use Assist with your voice... with the first one being on your smartwatch.

Android Watch

On the Android side there is a new tile that will allow you to access Assist and use the speech-to-text engine built into Android to send voice commands to Home Assistant. This will then, in turn, process the command and take action.

Now, it looks like there is still some manual intervention required, as you need to hit the send button, but if you watch the time between hitting send and the action being taken in Home Assistant, it does look very snappy! So, not a hands-free experience yet, but hopefully this will allow more people to start using it and providing feedback, which will then drive further features.

Apple Watch

On the Apple side, the team have managed to integrate with Siri through Apple Shortcuts, which does provide a hands-free experience. You activate Siri, say "assist" and then issue the command, which will, in turn, control the device.

It is worth noting that both of these are currently only available inside the Android beta and iOS beta respectively, but they should be included in the next release of the apps.

Voice Engines

Another feature of Assist is the ability to change voice engines if you want to. Currently, the default is the Home Assistant voice engine but, as they’ve mentioned previously, they are focussing on controlling devices for now and not on the other things you might want to ask a voice assistant, which is why you might want to change to a different engine.

In this release they have support for Google Assistant and OpenAI GPT-3, which you can switch between. As Paulus shows off in his demo, this technically means you can hear responses from Google Assistant through an Apple HomePod... like some sort of weird love child.

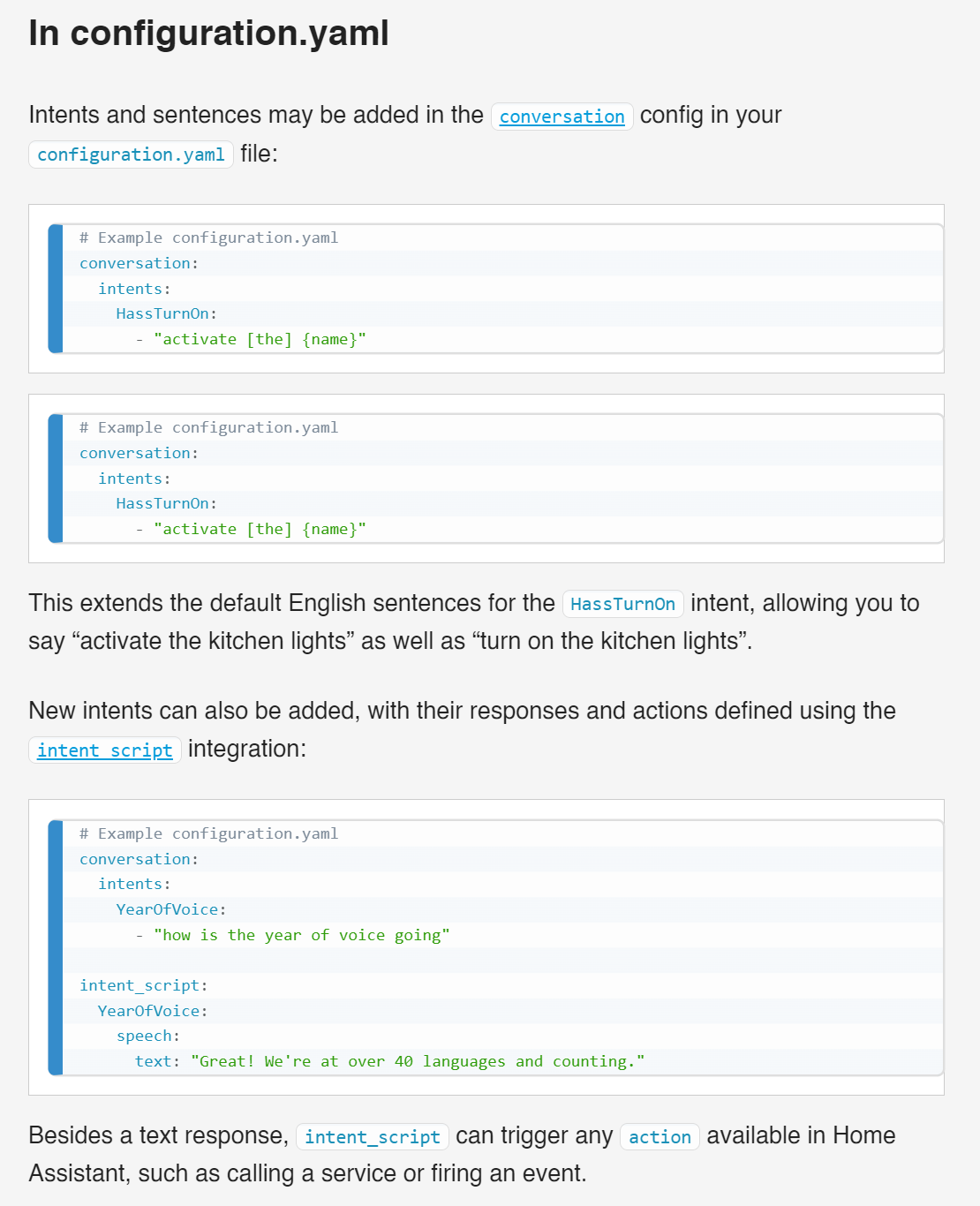

Custom Sentences

Finally, there is also support for custom sentences on Assist so that you can add your own weird and wonderful phrases to allow you to control your home however you want.

They aren’t the easiest thing to add, particularly if you are a beginner, but hopefully we will see some big improvements to how this is handled in the future. In the meantime, it’s nice to have the ability to do so.

They did also mention that in the future they want to make sentences shareable, kind of like how automations are with blueprints, so that you can easily import other users' sentences, which I think is a nice idea!

I think that’s all of the voice stuff for this release but if you want even more of the nitty gritty details then go and check out the live stream they did last week which had tons of good information in it.

All The Other Features in 2023.2

Sensor Groups

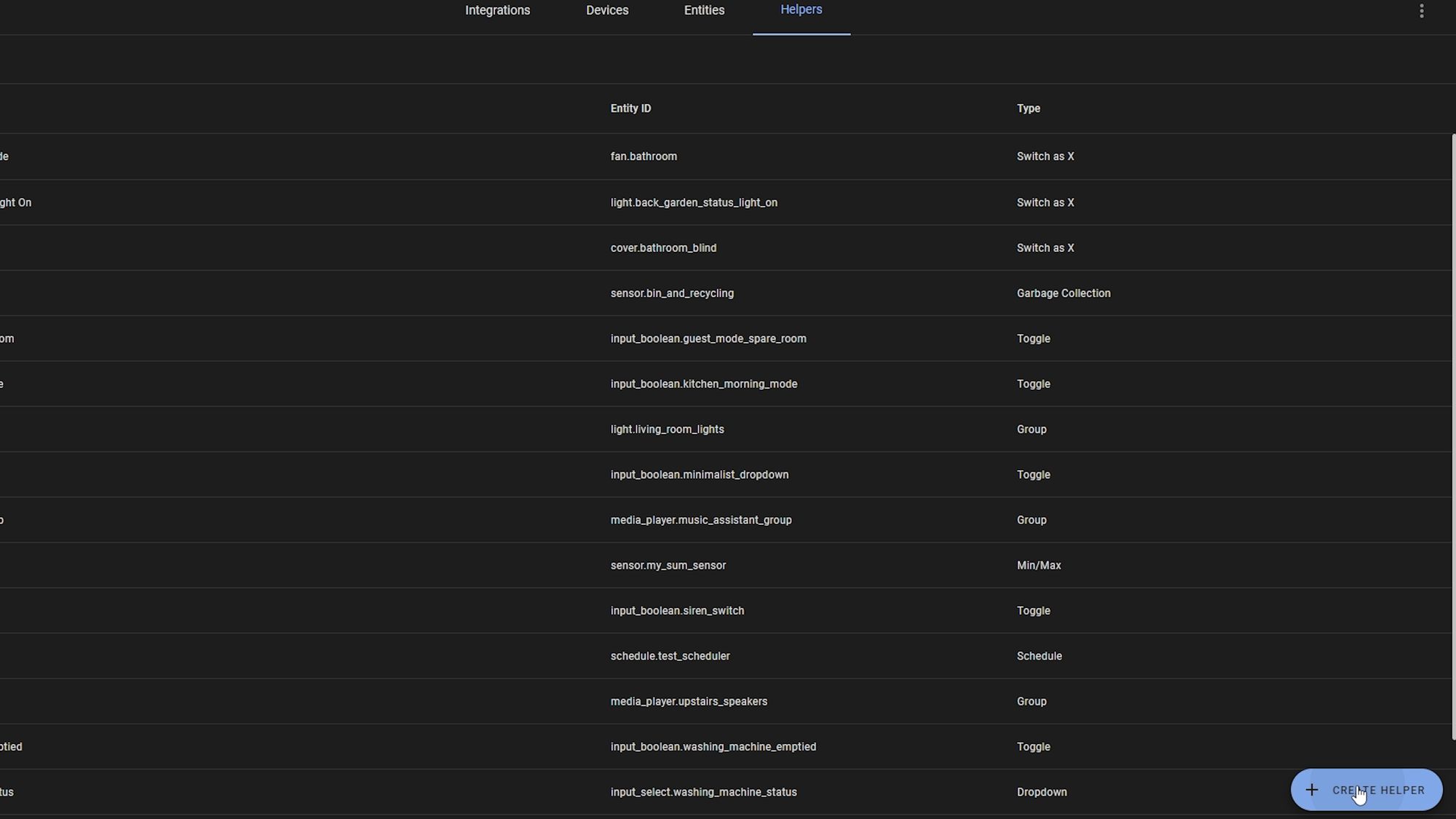

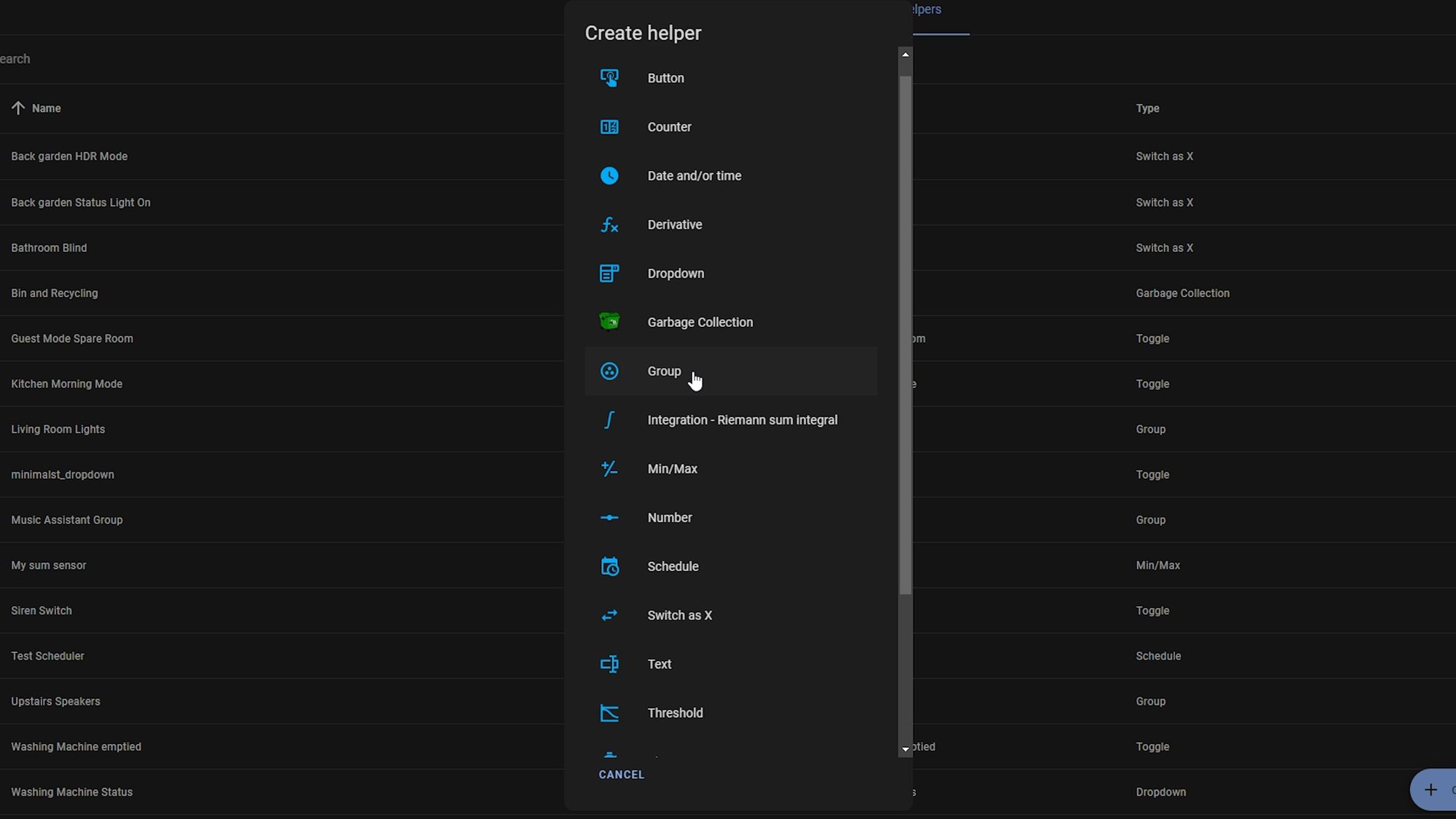

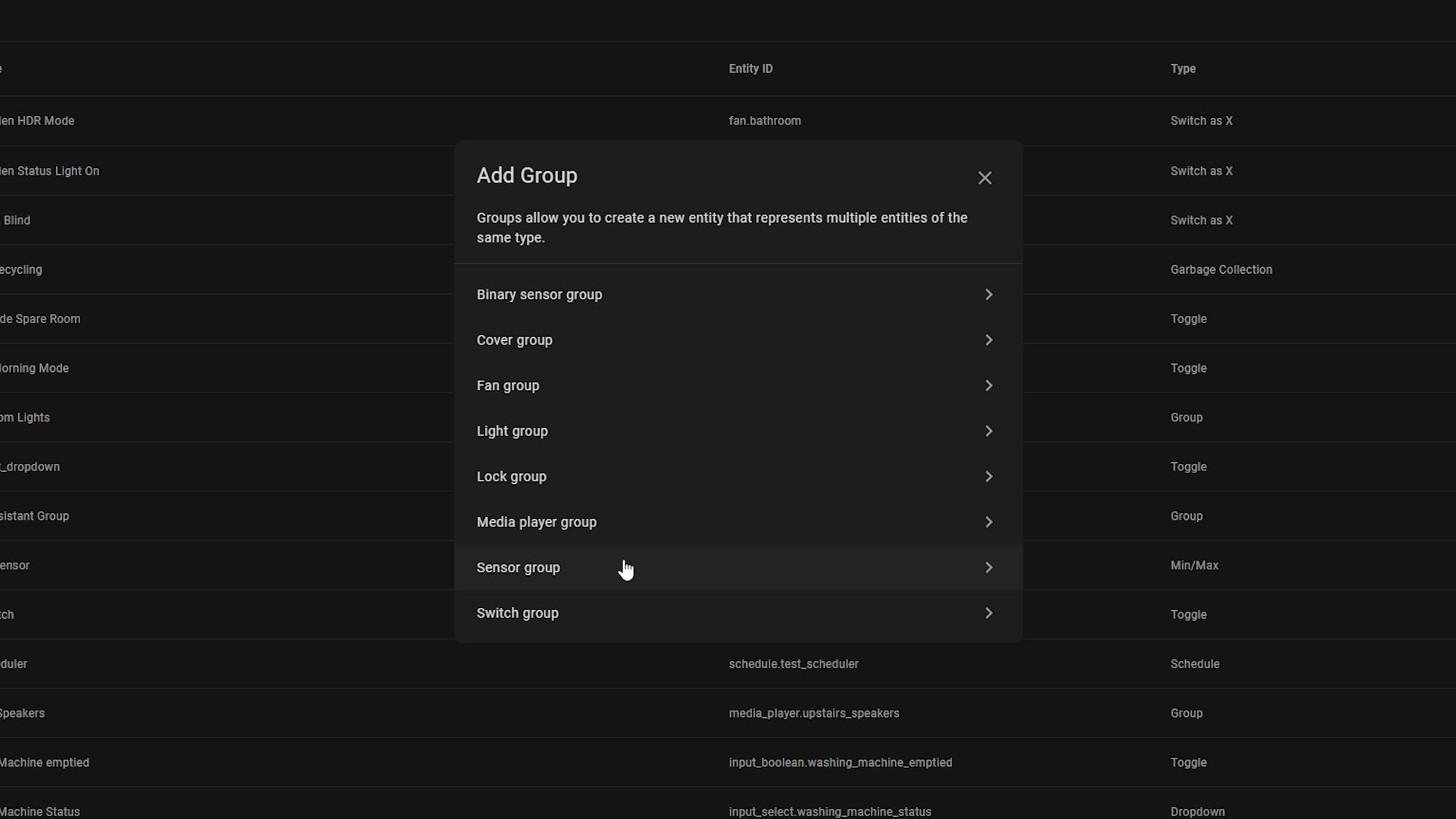

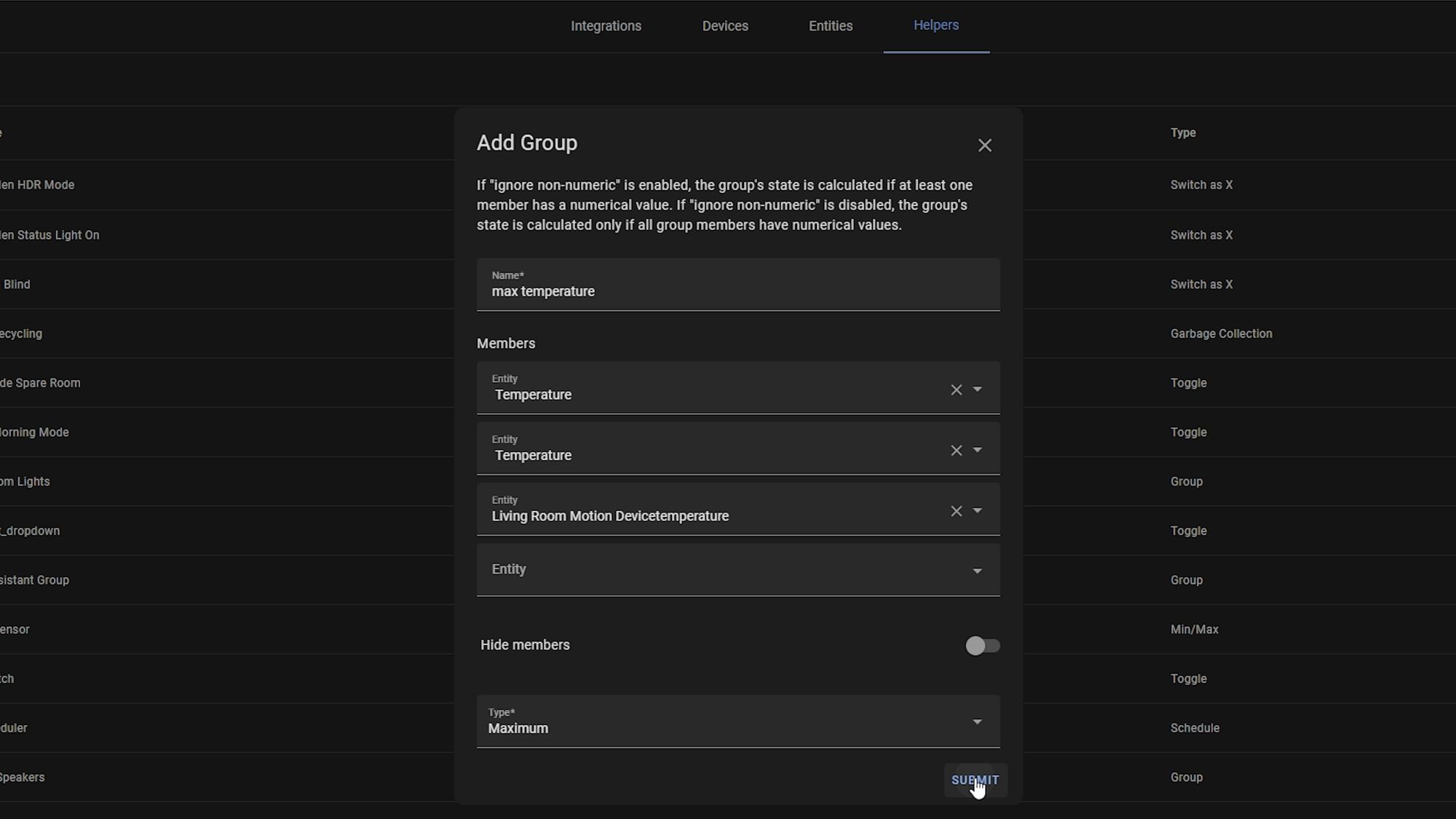

Now, let’s check out some of the other features in 2023.2 because that was just the voice stuff. So, next up we finally have sensor groups, which allow us to take a bunch of sensors that are the same or similar and group them all into one entity.

You can do this by heading over to Settings > Devices & Services > Helpers and creating a new group.

I could see this being useful for keeping track of loads of different sensors, like the average temperature from different rooms, maximum humidity, average power consumption, battery levels and so on...really neat addition!
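As a rough illustration of what a sensor group boils down to, the Python sketch below combines a handful of readings into a single value. The function name `group_state`, the room names and the numbers are all invented for the example; it only mimics the idea and is not Home Assistant's own code.

```python
from statistics import mean, median
from typing import Callable, Dict, Iterable

# Ways of collapsing several readings into one value.
AGGREGATIONS: Dict[str, Callable[[Iterable[float]], float]] = {
    "min": min,
    "max": max,
    "mean": mean,
    "median": median,
}


def group_state(readings: Dict[str, float], how: str = "mean") -> float:
    """Combine readings from several sensors into one value."""
    if not readings:
        raise ValueError("a group needs at least one sensor reading")
    return AGGREGATIONS[how](list(readings.values()))


# Made-up temperature readings in degrees Celsius, one per room.
temperatures = {"living_room": 21.0, "bedroom": 19.0, "office": 23.0}
```

With these readings, `group_state(temperatures)` gives the average across the rooms and `group_state(temperatures, "max")` gives the warmest one, which is the sort of single number the grouped entity would then report.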

Live Graphs

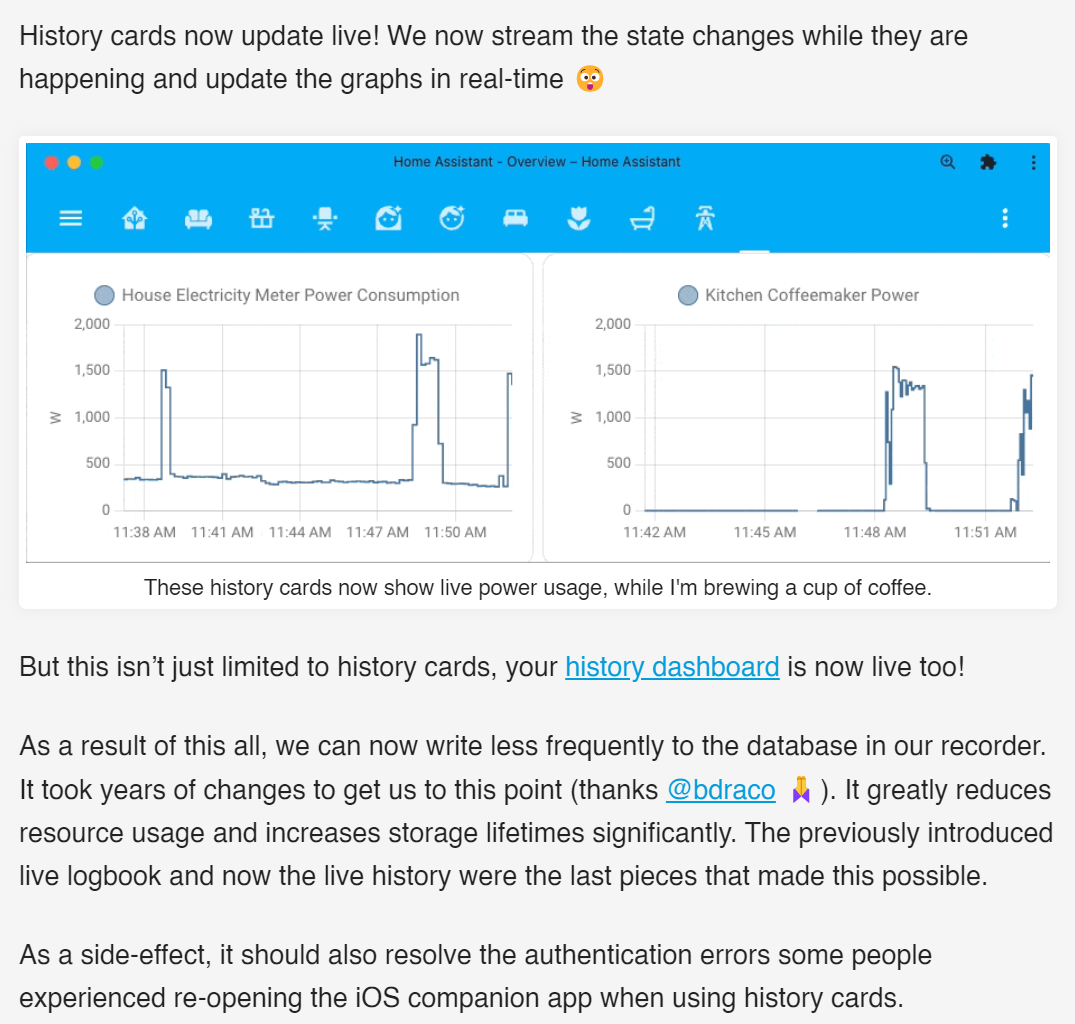

Another small but nice addition is that when you are viewing history graphs of sensors, they are now updated in real time which can be very useful for debugging.

I think it was mentioned that the graphs previously polled for changes once every 60 seconds, but now they will update in real time as you are looking at them. This has also allowed for less writing to the database, which in turn should help preserve storage lifetimes. That is especially important if you are still using an SD card and, as always, we welcome any and all performance improvements!

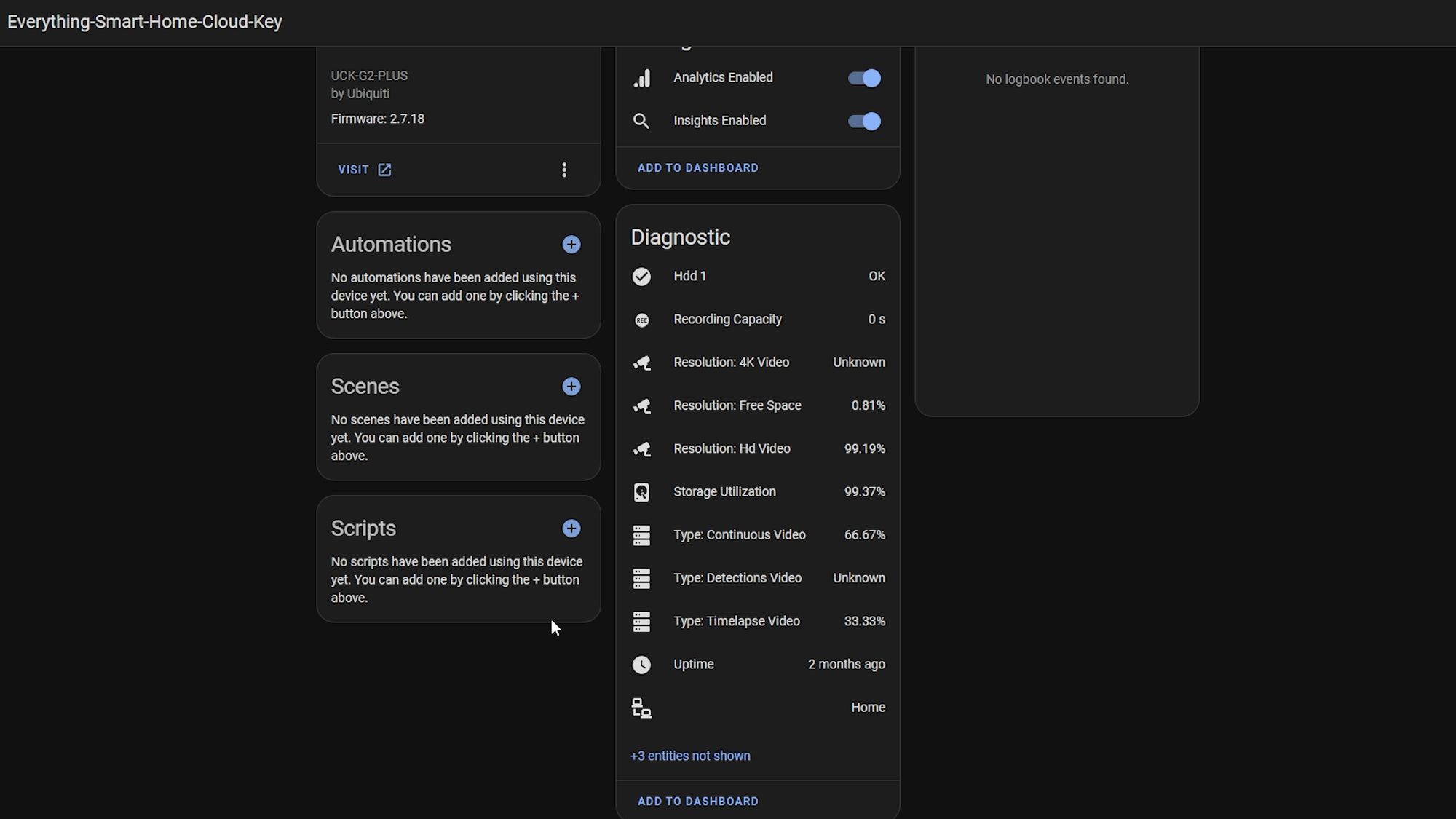

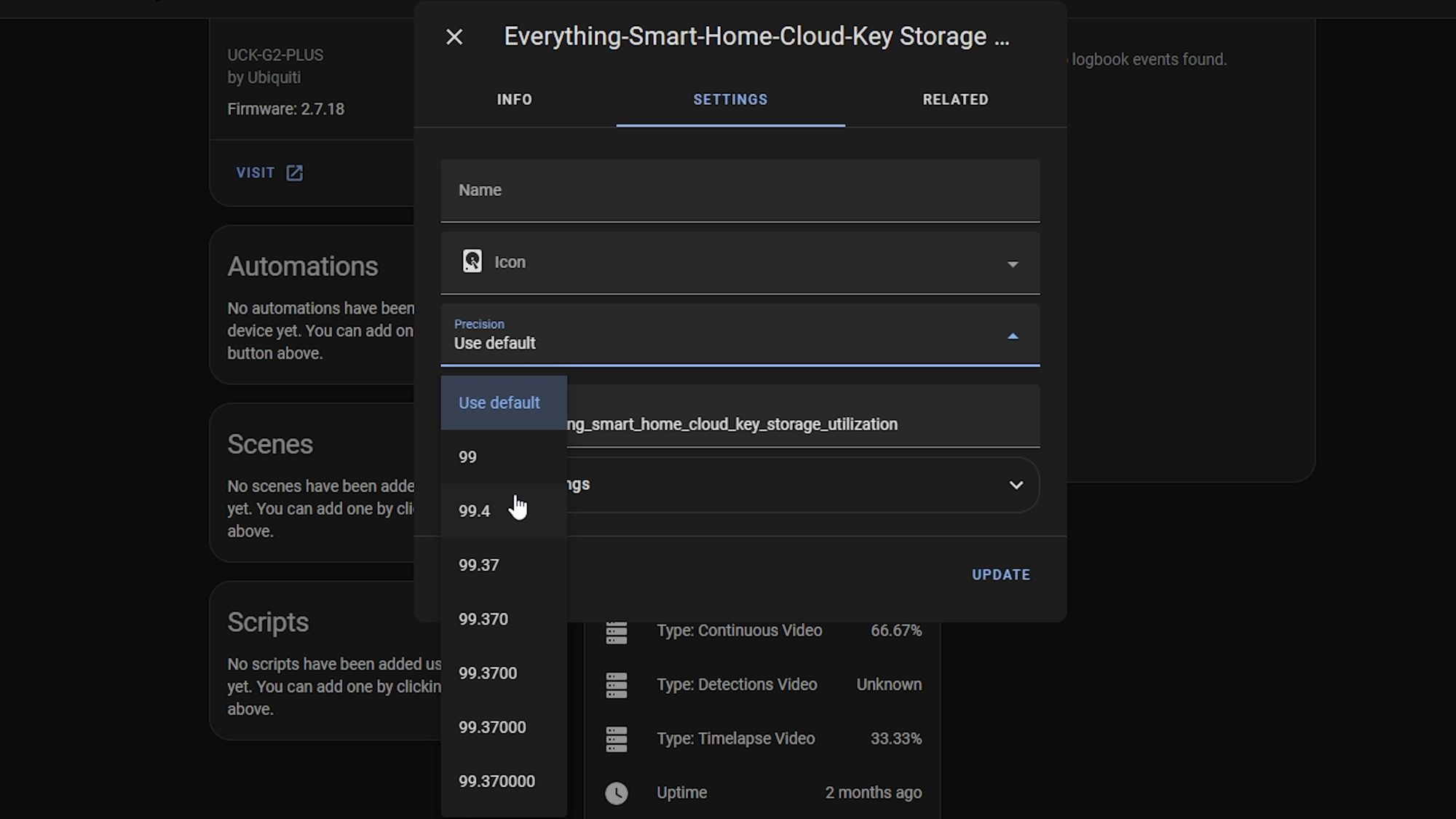

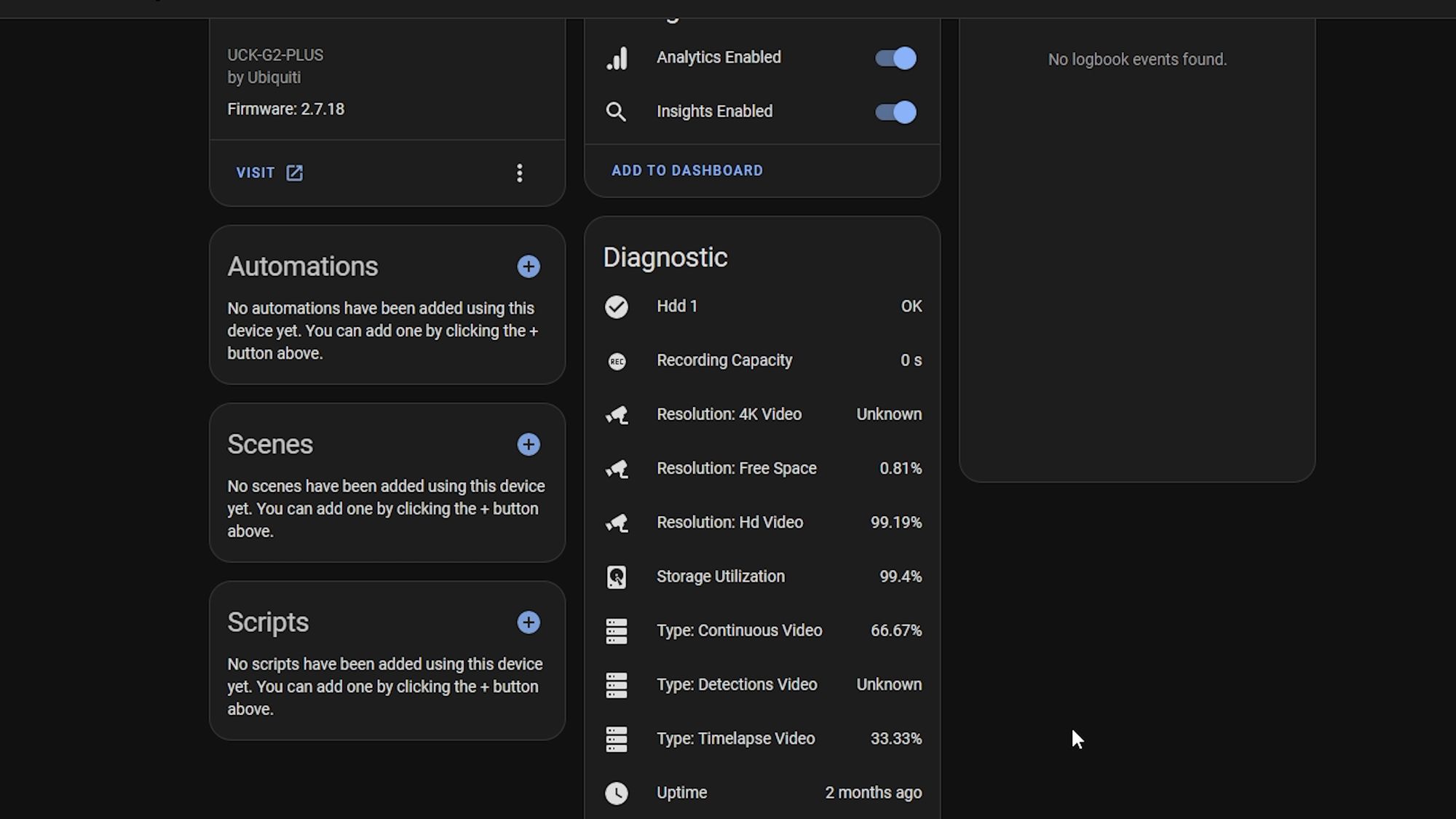

Sensor Precision

Sensors also now support changing the precision of their value, meaning you can set that according to your preferences.

This is really useful if, for example, you have a sensor that gives you a value with, say, 5 digits after the decimal point. It looks really messy on your dashboard and, if you don’t really care about that level of accuracy, this will allow you to change it.

Previously, you could probably do this with a template sensor but now this is just a quick change in the UI to set it. This also doesn’t just affect the way it’s displayed in the dashboard but actually changes the state of the entity so that will also be reflected in things like the history page, automations, scripts, database and so on.

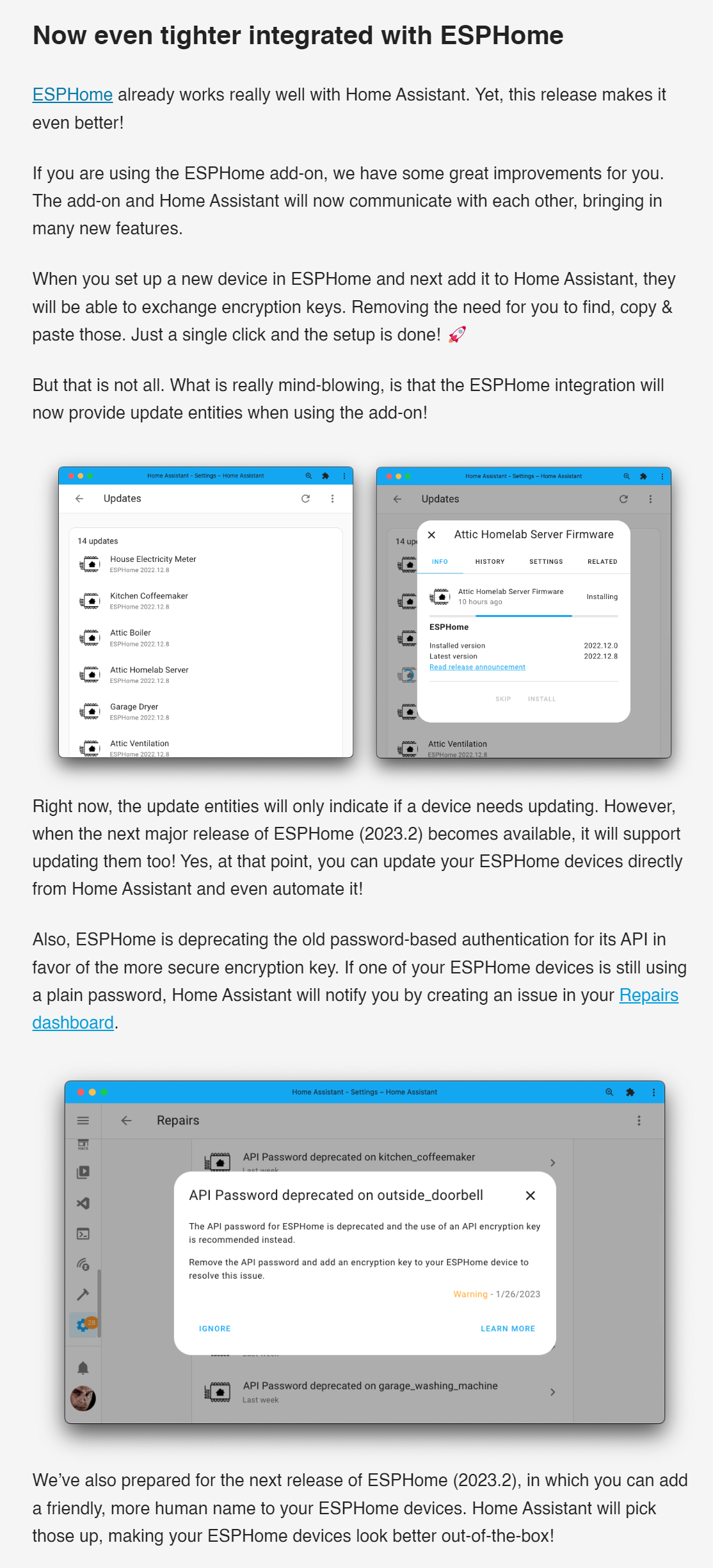

ESPHome

We also see an even tighter integration with ESPHome this release. Firstly, friendly names are going to work better in Home Assistant with the new release, making your ESPHome devices and entities look better when you set them up.

Also, when you set up a new device in ESPHome and then add it to Home Assistant, they should now be able to exchange encryption keys automagically. That will save you the hassle of copying and pasting like you sometimes had to do before, which I think is a nicer user experience.

Finally, you can now update the firmware of ESPHome devices directly through the update section in Home Assistant... when I saw this I was like, wait, that isn’t a thing?! I just thought that already existed for some reason since it seems so obvious. But no, apparently it wasn’t so that’s really cool to see!

I'm actually looking forward to some of these new ESPHome changes as they should be nice little updates for EP1 owners and make things just a little bit nicer, you know?

I know some of you will ask but, as far as I’m aware, these new features are only available through Home Assistant OS with the ESPHome add-on and won’t work if you use separate containers. As far as I understand it, they need to leverage some features of the OS, so that’s why they are only in Home Assistant OS, just so you are aware.

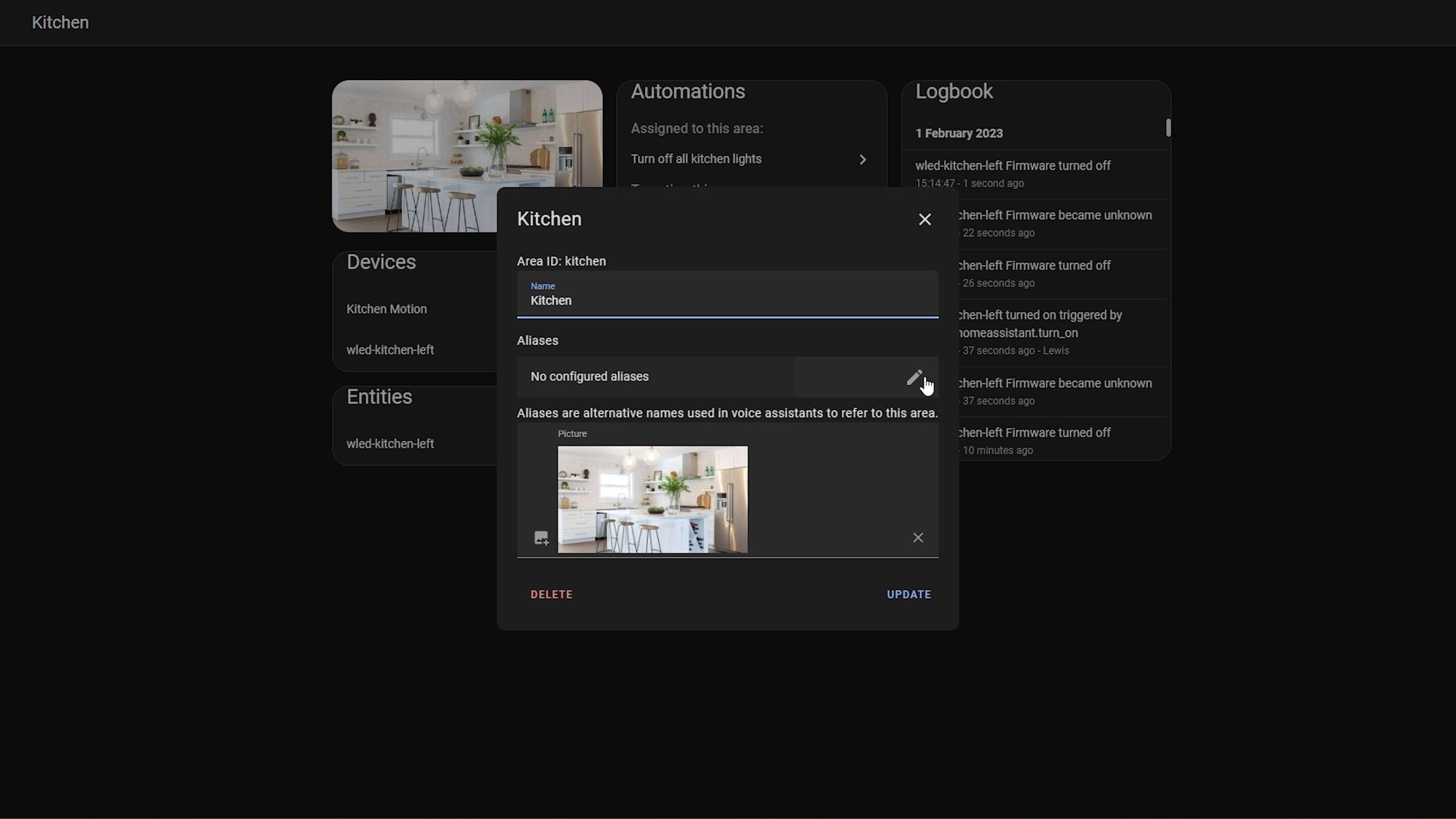

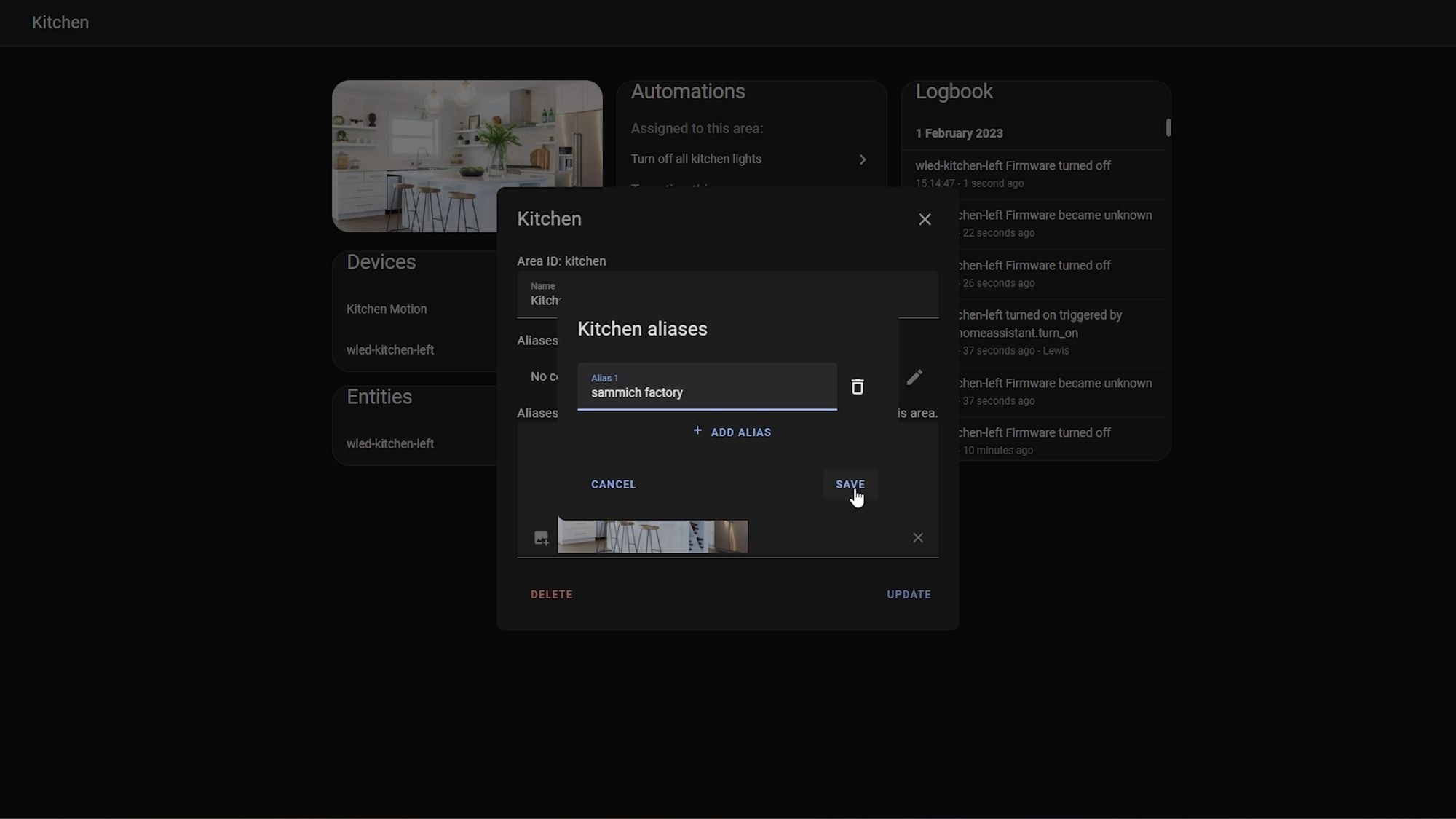

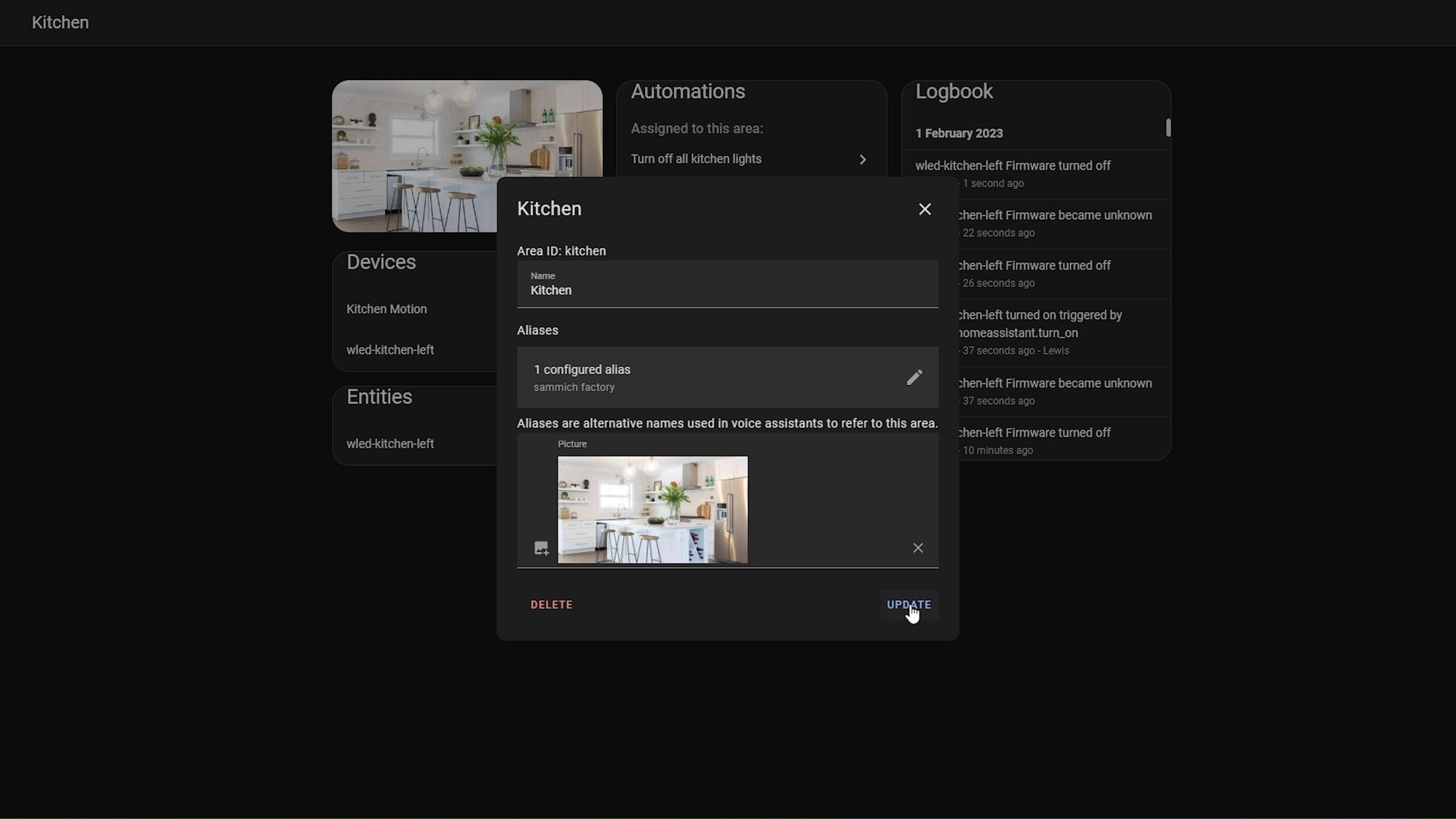

Area Aliases

Finally, in a previous release we saw support added for aliases for entities, which allows you to add alternate names for devices for use with multiple languages.

Well, this release also adds support for aliases in areas, which again will be useful for voice assistants. For example, if you want to say "turn off all the lights in the kitchen", you can now add aliases for the word "kitchen" - nice!

All The Little Things

As for the little things this month, we have quite a few! Firstly, the Reolink integration now supports FLV streams, auto discovery and binary sensors, meaning it can now support things like motion, vehicle detection and doorbell presses!

There is also a new service for creating calendar events which could be useful in your automations.
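If you want to poke at this from outside of an automation, something like the Python sketch below should be close. It builds (but doesn't send) a request to Home Assistant's REST API for the `calendar.create_event` service. The address, token and `calendar.household` entity are placeholders, the helper name is made up, and the field names are my understanding of the service, so double check them in Developer Tools before relying on this.

```python
import json
from urllib.request import Request


def build_create_event_request(base_url, token, entity_id, summary, start, end):
    """Build (but do not send) a REST call to the calendar.create_event service."""
    # Field names are assumed from the service's documented data; verify them
    # against your own install.
    payload = {
        "entity_id": entity_id,
        "summary": summary,
        "start_date_time": start,
        "end_date_time": end,
    }
    return Request(
        url=f"{base_url}/api/services/calendar/create_event",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_create_event_request(
    base_url="http://homeassistant.local:8123",  # placeholder address
    token="YOUR_LONG_LIVED_ACCESS_TOKEN",        # placeholder token
    entity_id="calendar.household",              # hypothetical calendar entity
    summary="Bin day",
    start="2023-02-10 07:00:00",
    end="2023-02-10 07:30:00",
)
# To actually send it: urllib.request.urlopen(request)
```

Keeping the build step separate from the send step means you can inspect the URL and payload first, which is handy while you are still working out which fields your calendar accepts.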

You can now change the unit of energy for sensors that give readings in watt hours to kilowatt hours. And the most important integration there is... the Oral-B integration now supports battery states, which might actually be useful!

As for new integrations this month, there are 13 new integrations:

There are also 3 integrations now available to set up from the UI instead of via YAML:

In terms of breaking changes, there are a few more this month than we’ve had in previous months. Most of them don’t look like anything to worry about from what I’ve seen, unless you’re running Home Assistant Core, which is only a small number of you. If you are, you need to make sure that you are using Python 3.10, as support for 3.9 has now been removed. If you are on a Home Assistant OS, Supervised or Container install, you don’t need to worry about this. Other than that, I don’t see anything major, but please make sure to double check for yourself!

Final Words

That's about it for this month! A huge release, particularly around the voice stuff. My favourite new feature from this month, aside from the voice features, has to be the ESPHome additions, purely for selfish reasons, but I actually like the grouping of sensors from the UI too - I think that is a small and handy little feature. But what is your favourite new feature? I'm interested in hearing your thoughts!

Until next time...